Three things this week. Europol ran its second multinational OSINT sprint in 14 months, this time against the deportation of Ukrainian children, and the format itself is the story. The European Commission's DSA rapid-response mechanism has now been used through two national elections without a published methodology for evaluating it, and the field should be pushing back on that. Hungary and Bulgaria voted within eight days of each other, and the results read as a mixed verdict for the FIMI frame, which is a problem for the analytic frame rather than for the verdict. Section 01 starts with the Europol sprint.

This issue also resolves the SkyOSINT test promised in #001. The full test, methodology, and assessment are in the From Signal & Shadow section below, which runs longer than the sections above it this week. That is deliberate.

Europol's second OSINT sprint normalises an operational format

Forty investigators, eighteen countries, two days. Europol hosted the team at The Hague on 16 and 17 April for a coordinated OSINT effort to identify and trace children forcibly transferred from Ukraine to Russia, Belarus, and the temporarily occupied territories. The team produced 45 reports, each containing leads that could help locate a specific child or identify individuals and structures involved in the transfer. The material covered possible transport routes, enablers such as orphanage directors, military units that may have assisted, facilities where children were taken (re-education camps, psychiatric hospitals, in some cases adoptive Russian families), and online platforms hosting photographs of the children. The output was handed to Ukrainian authorities to support prosecutions that will run through both Ukrainian courts and the ICC.

The 45 reports are the headline. The operational format is the story. This is the second time Europol has run a time-boxed multinational OSINT sprint with ICC and NGO participation. The first was in February 2025, targeting networks using online platforms to traffic Ukrainian nationals for sexual exploitation; that sprint involved 12 countries. The April 2026 sprint involved 18. The format is being normalised as an institutional operational mode: assemble the capacity in one location for a short, structured window, produce discrete leads attached to named individuals and locations, hand them to a prosecutorial pipeline. This is not how institutional OSINT was typically done in Europe before 2024. It is closer to how NGO investigators such as Bellingcat, OSINT For Ukraine, and the Centre for Information Resilience have worked for a decade, except that it now runs inside Europol's institutional structure and with ICC coordination.

This matters most to working analysts and investigative journalists who may be invited into the next iteration or a comparable sprint. The format rewards preparation: arrive with documented methodology, retain your own evidence chain, and agree attribution protocols with the coordinating body in writing before the sprint begins. The output is prosecutorial in ambition, which raises the evidentiary bar on everything produced.

What to watch for is whether the format holds. Two sprints in 14 months is a pattern, not yet a programme. Europol has not published whether the April output materially advanced specific Ukrainian prosecutions or ICC cases, and the February 2025 output was similarly opaque in follow-through. If a third sprint runs before autumn, the format is real. If the next 12 months produce a documented prosecution that rests on sprint-generated leads, the format is vindicated. Until either happens, treat the format as promising and under-evaluated.

The DSA rapid-response mechanism has been activated twice without a published evaluation methodology, and the field should push back

Forty-four signatories, voluntary by design. The European Commission announced the activation of the Digital Services Act's rapid-response mechanism for the Hungarian parliamentary election on 16 March 2026, with the signatories having agreed the activation the previous Friday. Commission spokesperson Thomas Regnier confirmed the activation at the midday press briefing, describing the mechanism as a voluntary system under which major platforms including TikTok and Meta would coordinate with fact-checkers and civil society organisations to flag potential interference and disinformation campaigns. The mechanism remained active until one week after the 12 April vote, taking it through to 19 April, the same day Bulgarians voted in their own parliamentary election. Orbán lost. The mechanism had been activated once before, for the 2024 Romanian presidential election, where TikTok's handling was subsequently cited in the Commission's case against the platform.

Both sides will claim vindication from the Hungarian result. Neither claim is supportable on the record. The pro-mechanism read is that the rapid-response system helped deliver a clean election outcome. This cannot be sustained without evidence that specific flagged content would otherwise have shifted the result, that the flagging was proportionate and procedurally sound, and that the effect can be distinguished from the underlying political conditions: record turnout, sustained corruption grievance, a credible opposition candidate who built his own counter-narrative infrastructure. No such evidence has been published. The Commission's own first-round notice under the Code of Conduct on Disinformation explicitly does not assess whether the actions taken by signatories were sufficient, which is the data point that would matter most for an evaluation.

The critical read is that Brussels interfered in a domestic election. This is weaker than it sounds on first hearing because the mechanism is voluntary by design, with no binding takedown authority, but the structural concern survives the voluntariness. A voluntary mechanism still requires published evaluation criteria to be assessable. Without those criteria, neither participants nor critics can distinguish flagging that was domain-legitimate (clearly fabricated content, coordinated inauthentic behaviour with evidentiary trails) from flagging that was politically coloured. The mechanism has now been used twice without that methodology in the public record. The field should not let it be used a third time on the same terms.

The next two Commission rapid-response activations are the data points that matter. Track them, document them, and build a comparable case record before the mechanism is deployed for the next cycle of European elections. The methodological question is not whether the mechanism worked. It is what working would look like, in evidence anyone can assess.

Practitioner instruction: when reporting on a rapid-response activation, do not accept the activation as evidence of the mechanism's necessity, and do not accept the post-vote outcome as evidence of its effectiveness. Neither inference follows from the mechanism's existence. The reporting question is whether the signatories produced a methodology log that an outside investigator could audit, and what that log shows.

Hungary and Bulgaria together show the FIMI frame is doing less work than it claims

Two elections, eight days apart. One reads as a win for the pro-European narrative, one reads as a loss. Magyar's Tisza defeated Orbán in Hungary on 12 April with a two-thirds parliamentary supermajority. Radev's Progressive Bulgaria won 44.7 per cent on 19 April. The easy read is that the democratic-backsliding frame got a mixed verdict. The read past the reported facts is that "pro-Kremlin versus pro-European" is itself a poor analytic frame for what voters were responding to.

In Hungary, Magyar ran against corruption and institutional capture. In Bulgaria, Radev ran against corruption and institutional instability. Voters in both countries rejected incumbents on domestic-governance grounds; the foreign-policy alignment followed, rather than led. The FIMI frame, as imported from think-tank and EEAS sources, predicts that exposure to pro-Kremlin messaging shifts votes toward pro-Kremlin outcomes. Hungary and Bulgaria delivered opposite outcomes inside the same eight-day window with comparable exposure environments. The frame does not predict what happened. The domestic-governance frame does.

This matters for OSINT and journalism work because the analytic frames the field imports from think-tank sources can obscure the domestic drivers that do more of the explanatory work. When a field imports a frame that predicts poorly, it spends investigative resources on the wrong questions, hands rhetorical leverage to political actors who can point at the predictive failures, and weakens the case for the work the frame does explain well (coordinated inauthentic behaviour, attributable foreign-state operations, narrative-laundering networks).

The methodology question

Before assessing the next election through the foreign-interference lens, establish the domestic-governance baseline first. Specifically: what are the top three voter-stated grievances in pre-election polling, what is the institutional-trust trajectory over the prior 24 months, and what are the credible domestic counter-narratives in circulation. With that baseline in hand, the analytic question for the foreign-interference layer becomes whether observed external operations are amplifying existing grievance or attempting to introduce new narratives, and whether they are reaching audiences who would otherwise be reachable by domestic actors. Both layers are real. Both are present. The order of operations is the methodology.

The open question for the next piece of reporting: which OSINT methods best separate domestic grievance drivers from externally amplified narratives, given both are real and both are present? The frame that answers that question well is the frame the field should use. The frame that does not is the frame the field should let go of.

This is a Shadow argument, not a verdict. The methodology proposal is the durable output. The Hungary-Bulgaria comparison is the case in front of us; the next two European elections (the data points the Signal flagged above) are where the proposal can be tested.

| EUvsDisinfo | Disinformation Review, 17 April: Hungary and Bulgaria framed as Kremlin-targeted. Worth reading as the source the read above argued against. The FIMI frame is doing real work in the ecosystem; the question is where it stops explaining. |

| Wikipedia + CEC | Hungary and Bulgaria vote within eight days. Magyar's Tisza defeats Orbán on 12 April with a two-thirds parliamentary supermajority; Radev's Progressive Bulgaria wins 44.7 per cent on 19 April. The read above argued the frame matters more than either result. |

| Maltego | Maltego consolidates its platform as Maltego One, announced 17 March. Graph, Search, Monitor, Evidence, and Hunchly now sit under a single platform. Worth knowing if you missed it; the tooling consolidation story in institutional OSINT has been slow and now has a concrete artefact to track. |

| i-intelligence | Aleksandra Bielska publishes a list of privacy-preserving frontends for major platforms. A useful consolidated reference for quick checks without an account, though the practitioner question is which of these mirrors actually preserve the privacy properties they claim, and which are fragile to platform countermeasures. Worth saving; worth stress-testing before integrating into an investigation workflow. |

SkyOSINT, tested

Following last week's note on the platform, I ran two recently documented orbital events through SkyOSINT on 21 April. The question from #001 still stands: corroboration layer, or lead-generation layer. The test was designed to answer that question against events already in the specialist record, using queries any newsroom user would attempt.

Case one. The classified Soyuz-2-1b launch from Plesetsk on 16 April UTC, catalogued as international designator 2026-083. US Space Force tracking had grown to 10 catalogued objects by 18 April across two distinct orbital planes, with a rare Volga upper stage enabling the multi-plane insertion. Jonathan McDowell's General Catalog covers the mission and flags the NORAD ID gaps where additional objects had been detected but not yet formally listed. The launch is exactly the kind of event the platform's marketing implies it should surface: fresh, classified, with a specialist reporting trail already building out.

Case two. Shijian-25, the Chinese GEO spacecraft flagged in the CSIS GEO study covered by Breaking Defense on 7 April. SJ-25 executed a rendezvous and refuelling manoeuvre with SJ-21 in June 2025, tracked by independent sky watchers and by Slingshot Aerospace and COMSPOC at the time. No orbital parameters for SJ-25 have been published by either the US or Chinese governments. The object sits in the specialist record and outside the formal public catalogue, which is a stress test for a platform whose data lineage runs through Space-Track and CelesTrak.

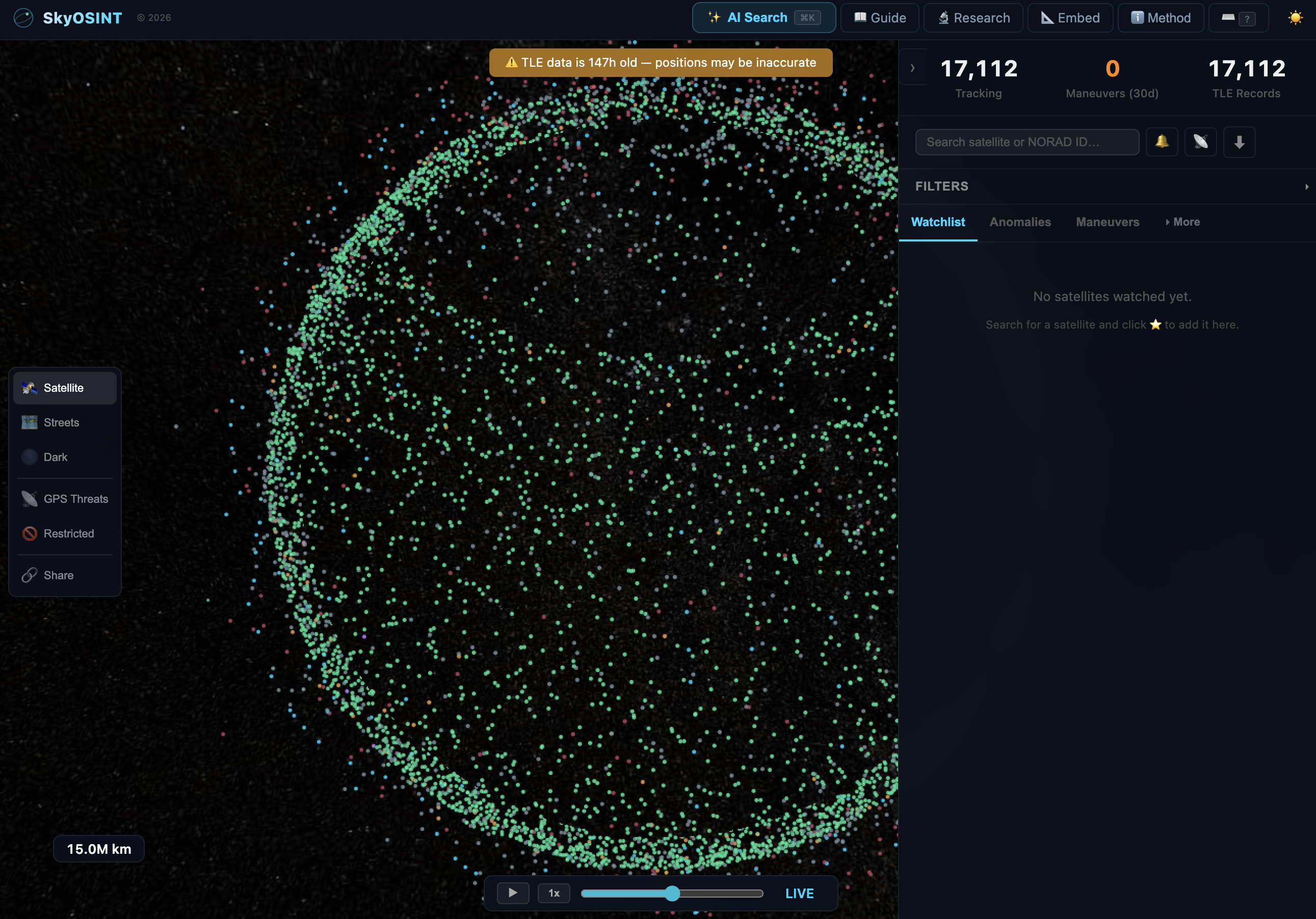

The platform returned zero matches on the newly catalogued NORAD IDs from the Plesetsk launch through both the AI Search and the direct lookup, five days after launch. Zero matches on "SJ-25" and "Shijian-25". For reference, NORAD 25544 (the International Space Station) resolved correctly, which confirms the parser works against mature catalogue objects. The platform's own header strip read 17,112 tracked, 17,112 TLE records, and zero manoeuvres detected in the last 30 days. One TLE per object leaves nothing to compare against, which is why the detector is idle.
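Why one TLE per object idles a detector can be shown in a few lines. The sketch below is not SkyOSINT's detector, whose internals are not documented here. It is a minimal illustration under stated assumptions: the `ElementSet` record, the `detect_manoeuvres` function, the catalogue numbers, the mean-motion values, and the 0.001 rev/day threshold are all hypothetical. The point is structural. A change detector compares consecutive element sets for the same object, so an object with a single record can be neither flagged nor cleared.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ElementSet:
    """One orbital element set for one object (hypothetical, simplified record)."""
    norad_id: int
    epoch_day: float    # days since an arbitrary reference epoch (illustrative)
    mean_motion: float  # revolutions per day


def detect_manoeuvres(records, threshold=0.001):
    """Flag jumps in mean motion between consecutive element sets per object.

    Returns (flagged, unassessable):
      flagged      maps norad_id to a list of (epoch_before, epoch_after) pairs
      unassessable lists objects with fewer than two element sets
    The threshold is an assumed illustrative value, not an operational one.
    """
    history = defaultdict(list)
    for rec in records:
        history[rec.norad_id].append(rec)

    flagged = {}
    unassessable = []
    for norad_id, sets in history.items():
        if len(sets) < 2:
            # A single element set gives the detector nothing to compare against.
            unassessable.append(norad_id)
            continue
        sets.sort(key=lambda r: r.epoch_day)
        jumps = [
            (prev.epoch_day, curr.epoch_day)
            for prev, curr in zip(sets, sets[1:])
            if abs(curr.mean_motion - prev.mean_motion) > threshold
        ]
        if jumps:
            flagged[norad_id] = jumps
    return flagged, sorted(unassessable)


# Illustrative values only: 90001 and 90002 are made-up catalogue numbers.
records = [
    ElementSet(90001, 0.0, 15.500),
    ElementSet(90001, 1.0, 15.500),
    ElementSet(90001, 2.0, 15.510),  # mean motion shifts between day 1 and day 2
    ElementSet(90002, 0.0, 1.0027),  # only one element set on file
]

flagged, unassessable = detect_manoeuvres(records)
print("flagged:", flagged)
print("unassessable:", unassessable)
```

In this toy run, object 90001 is flagged for the interval between its second and third element sets, and 90002 lands in the unassessable list. A catalogue where tracked objects and TLE records are equal in number puts every object in that second list, which is consistent with a header strip reporting zero manoeuvres regardless of what happened in orbit.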

Use the platform as a catalogue browser for established objects and as a display layer for the Stanford GPS interference feed, which functioned correctly over Kaliningrad during the test. Do not use it as a lead-generation tool for fresh events, and do not rely on it as a standalone corroboration layer. Cross-check against McDowell's GCAT and commercial providers such as LeoLabs, Slingshot, or COMSPOC before publication.

The #001 framing holds with one refinement. The platform is a verification tool, not a discovery tool, but the verification it supports is bounded to objects and patterns already in the mature Space-Track catalogue. For fresh events, the platform is neither lead-generation nor corroboration; it is silent. This is not a failure against its own methodology block, which scopes the tool to OSINT use over public TLE data. It is a mismatch between what the launch-week framing implied and what the data architecture can deliver. That mismatch is the story.

The work continues.

Derek

The Signal is the weekly intelligence briefing of Signal & Shadow, the independent forensic OSINT investigation and methodology practice of Derek Bowler. Published every Thursday at 07:00 CET. Three categories: Intercept (caught clean from the week's noise), Signal (a confirmed call with weight), Shadow (the read past the reported facts).

Membership at signalandshadow.io/upgrade. The Signal is delivered in full to every subscriber regardless of tier.